China has launched its "social credit system," hoping to increase social trust. But when value is calculated by opaque algorithms using vast amounts of personal data, what will happen to China, and indeed, what might it mean for the world?

China is looking to restore "trust" and "sincerity" in society by making a digital "social credit system." In this kaleidoscopic system of ratings and blacklists, good deeds are met with rewards, and bad deeds with punishments.

China hopes this will cure many current ailments, including corruption in government procurement, a lack of food security, and unequal access to healthcare. But the rest of the world can also learn something from this project: the dangers of governing by grading people.

When Communist-Style Social Control Meets Post-Modern Governance

The compilation of digital files on individuals may be seen as a revival of the principle of the "dang'an," a file containing one’s school, work, and political records. The dang'an has lost a lot of its impact on urban citizens’ lives with the advent of the market economy. But with its aim of sharing data across administrative sectors, the social credit system may bring new life to the device.

The dang'an aside, a key idea behind the social credit system is that economic development is hampered by a lack of "trust" within society. There is a real need in China for reliable financial credit data to make loans and transactions more secure. Thus, the plan for the system puts great emphasis on economic misbehavior, such as failing to pay one’s bills. To develop the system, the People's Bank of China (PBOC) in 2015 allowed eight companies to roll out experimental credit rating services based on their own data.


But, as Martin Chorzempa of the Peterson Institute for International Economics argues, these companies’ rating services, such as Ant Financial's Sesame Credit, go beyond their intended purpose. With business models based on integrated services, the companies see value in combining social media behavior with financial data, to produce an all-encompassing score of "trustworthiness."

From the PBOC’s point of view, the pilots raise regulatory issues on competition and conflict of interest. This is why the central bank has not yet issued formal authorization to these companies, leaving the experimental services in legal limbo.

From a citizen's perspective, this takes the social credit system to a whole new level. In this iteration, a person’s "social trustworthiness" depends on more than just their interactions with public authorities or financial capabilities. Even their personal, intimate behavior is part of the criteria.

Good Social Credit Means Perks

What makes this system particularly effective is that it aligns credit ratings with everyday benefits for citizens. In pilot municipalities like Rongcheng in Shandong province, the social credit system is incorporated into a reorganization of public services. A good credit rating entitles one to discounts on utilities, favorable loan rates, and waivers on deposits for bike rentals. Indeed, residents have apparently enjoyed more safety on the roads, as drivers and pedestrians adhere to rules for fear of losing credit points.

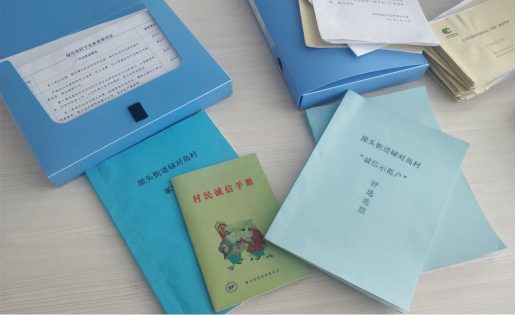

"Social credit" documents in Rongcheng. Photo courtesy of Tom Hancock.

On the other side, people who lose credit face disproportionate punishments. For instance, the Supreme People's Court blacklists individuals for failing to repay their debts and prohibits them from traveling by plane or first-class trains. More importantly, these people’s names and personal details are published for everyone to see on a web portal. This blacklist can be picked up by the credit rating services, creating ripples across different aspects of the subjects' lives, extending to their families and friends.

This is where the social credit system differs from the old, secret dang'an. One's social credit rating is meant to be open to the public. In principle, Sesame Credit is voluntary: customers need to opt in to get a rating. In exchange, it offers a wide range of benefits, such as fast-tracked visa applications and easier car rental. Just as many people find it hard to leave Facebook even after the Cambridge Analytica scandal, the advantages offered by these services make it very costly for users in China to opt out. The subtle yet coercive power of reputation in social networks thus spreads throughout people’s daily lives.

The Pitfalls of Governance by Ratings

According to the official planning outline, the social credit system is intended to "stimulate the development of society and the progress of civilization." But the criteria on which individuals are judged are largely determined by the localities, administrations, and businesses that put pilot programs in place. This means that Chinese citizens are not held to uniform standards—it all depends on where they live and what platforms they use.

In effect, the criteria tend to bundle moral and political values together with laws and regulations. In Rongcheng, a citizen can be rewarded for being a good daughter-in-law or giving to charity. Sesame Credit is said to take into account consumption patterns such as purchasing diapers (a good thing), and entertainment habits such as playing online games (a bad thing). In most cases, credit systems reward very conservative values and conformist behavior. They also tend to discriminate against the poor, by rewarding consumption, and by putting particular emphasis on penalizing "deadbeat borrowers" and those who cannot pay their utility bills on time.


Citizens have little say in the definition of criteria, despite the pervasive impact the social credit system has on their lives. In Suining county in Jiangsu province, an early pilot program assigned the population to four categories, A to D, according to obscure criteria. It triggered strong opposition from the population and had to be revamped. This case shows that technology doesn't guarantee fairness, nor can it preempt resistance.

Cybersecurity and Technical Issues

And then there are the risks brought about by the technologies themselves, vulnerability to hacking and database breakdown being the most obvious.

In addition, making myriad databases interoperable is an enormous challenge. Even if that could be overcome, there’s still the matter of data accuracy. The input and handling of data are prone to errors. If all social credit databases were linked, erroneous data in any one database would be replicated across different platforms.

The people in charge of these systems find themselves in an unusual position of power. Without strong checks in place, there will be new opportunities for political surveillance, industrial espionage, and even blackmail and bribery.

Governance by Algorithms Requires Democratic Oversight

The all-encompassing scope of the social credit system has incited an endless stream of dystopian commentaries. Observers in other countries wonder whether to copy or fight it. Some worry about possible extraterritorial effects, as foreign entities active in China may be ranked too, and as private companies are increasingly using the same tools to regulate access to public spaces.


Much of this criticism misses the point, but real challenges do remain. Implementing a countrywide social credit system that actually works will be an enormous technical and bureaucratic undertaking. Moreover, pervasive surveillance may generate complications that could undermine social stability.

What is certain is that experimentation of this type and scale is unprecedented. The world can learn from China’s social credit system what is at stake when governance is done through data collection and algorithmic ratings. We tend to underestimate how much of this is already going on around the world. For instance, U.S. agencies collect intrusive personal data to allocate and distribute welfare benefits, and they do so using biased algorithms that effectively increase inequalities.

The social credit system should thus be a wake-up call. The world should acknowledge that data-processing technologies are political devices. They must be carefully considered, transparent, and subject to strong and effective checks and balances.
